Deepfake evidence is infiltrating courtrooms, and most legal teams don’t have a game plan for handling it. In a recent webinar, we brought together a U.S. District Judge and leading forensic and legal experts to reveal exactly how to challenge, authenticate, and detect deepfake evidence in litigation before it undermines your case.

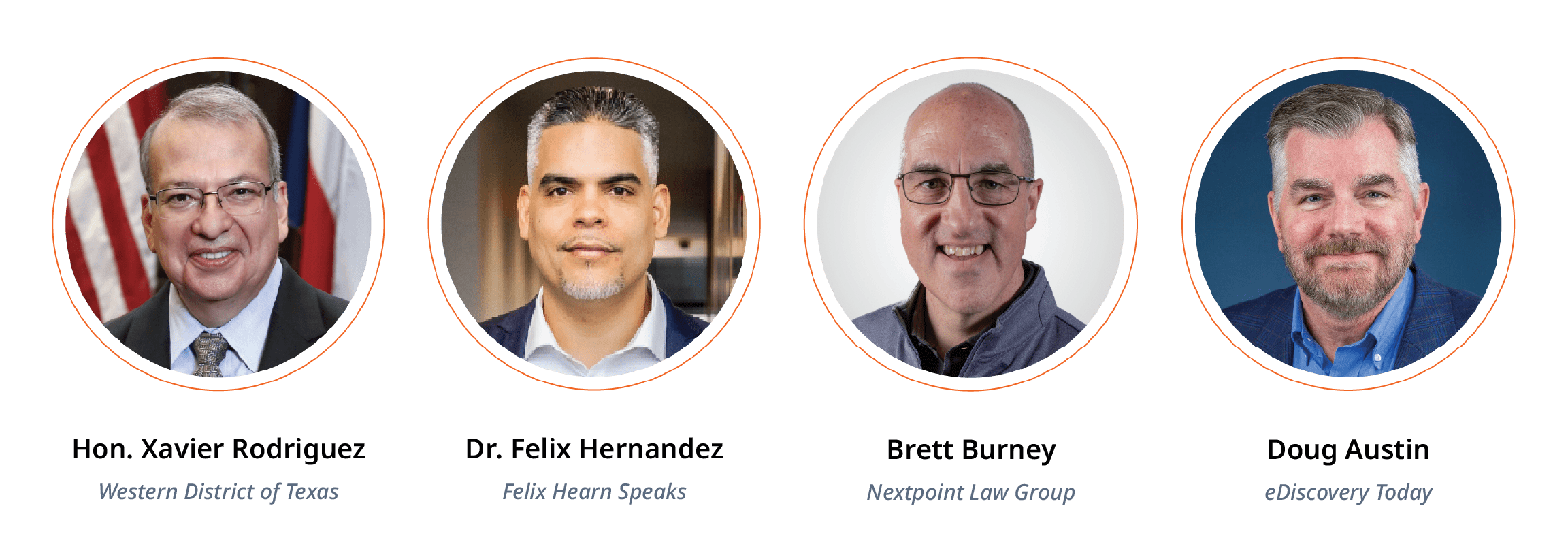

The Expert Panel

In a recent webinar with EDRM, we assembled an exceptional panel to tackle one of the most pressing challenges facing litigation today: detecting deepfake evidence.

Together, they broke down exactly what legal teams need to know about AI-generated audio, video, and images showing up as evidence — and how to handle them.

What are Deepfakes?

Deepfakes are AI-generated or AI-manipulated images, videos, audio, or documents designed to appear real. Using advanced machine learning, these materials can convincingly mimic a person’s voice, likeness, or actions, often making them difficult to distinguish from authentic evidence at first glance.

In the context of litigation, deepfakes pose a serious risk. Fabricated or altered evidence can create confusion, undermine credibility, and jeopardize the integrity of a case. As courts grow more aware of AI-generated content, legal teams must take extra care to verify the authenticity of the evidence they submit. Failing to do so may result in evidentiary challenges, sanctions, or other serious consequences.

Why Deepfakes Are Different This Time

Manipulating evidence isn’t new. Judge Rodriguez opened the discussion by pointing to examples throughout history: Stalin airbrushing colleagues out of photographs, and 19th-century portraits with swapped heads. We’ve been fabricating and altering evidence for centuries.

But something fundamental has changed…

“We’ve been fabricating, altering, modifying evidence for a long time,” Judge Rodriguez stated. “The difference now is accessibility.”

Today, the power to create convincing fake evidence sits in everyone’s back pocket. AI tools draw from massive wells of data (millions of images, hours of audio) to generate realistic content that can fool even trained observers.

Nothing drove this point home more dramatically than Dr. Hernandez appearing on camera as George Clooney, then switching to Eddie Murphy in real time. “It goes beyond the old way of photoshopping,” he explained. “You can swap your face on video. You can change your voice and even your language in split seconds.”

See the technology in action as Dr. Hernandez switches identities in real time below.

The Growing Threat in Litigation

Doug Austin walked the panel through several real-world cases where deepfakes have already caused serious harm:

The Baltimore Principal Case: A high school athletic director created an AI-generated audio clip of his principal making racist remarks and spread it on social media. The principal faced severe backlash, received threatening messages, required police protection at his home, and had to step away from his duties. He still hasn’t returned to his position. The athletic director was sentenced to four months in jail, and the principal has sued the school district for failing to correct the record.

Pennsylvania Private School Case: A single student reportedly created sexually explicit AI images of nearly 50 female classmates. Similar incidents are becoming disturbingly common.

California Superior Court Case: Judge Victoria Kolakowski issued terminating sanctions against a party who submitted obviously fake evidence, including video with robotic movements and mismatched audio, and images that superimposed color photographs on black-and-white backgrounds. The evidence was so poorly fabricated that Judge Kolakowski conducted her own investigation. In her order, she provided detailed examples of the manipulation (pointing out specific flaws like the color/black-and-white inconsistencies and audio sync issues) and uploaded the disputed videos to Google Drive to preserve them as part of the court record, ensuring they’d remain accessible for review even after the case concluded.

Doug noted, “Fortunately, these deepfakes weren’t very good. But I expect we’ll see more cases with a lot better quality deepfake videos and images and audio files to come.”

There’s No Silver Bullet for Detection

When it comes to detecting deepfake evidence in litigation, the panel was unanimous on one critical point: There is no single definitive test.

Dr. Hernandez demonstrated this by showing two nearly identical images: one real, one AI-generated. Brett guessed correctly (barely), but admitted it was essentially a coin flip. “The more we get people to view this image,” Dr. Hernandez explained, “the more they’ll get to that percentage that I provided in the survey,” where only 0.1% of people can accurately detect deepfakes, even when they know they’re looking for one.

Instead of relying on a single indicator, detecting deepfake evidence requires triangulation: combining multiple signals and checking whether they point the same way. Those signals include:

- Temporal inconsistencies (events happening out of sequence)

- Compression artifacts (unusual visual distortions or patterns that appear when files are manipulated and re-saved multiple times)

- Audio-video sync errors (mismatches between sound and picture)

- Biometric anomalies (unnatural eye movements, breathing patterns, facial features)

Dr. Hernandez demonstrated AI detection tools like DeepFake-o-meter from the University at Buffalo, which uses multiple models to analyze media. But he was careful to note the limitations.

“Each one of them has very different mechanisms to detect a deepfake. You’re going to have to do a lot of research to determine which one is going to give you the results that you are comfortable with.”

Perhaps most importantly, he warned that AI detection tools themselves have disclaimers in their usage policies stating they won’t provide 100% certainty.

Preservation is Everything

Brett emphasized a critical point of the discussion: If you don’t preserve the original file properly, you may destroy the very evidence you need to prove manipulation.

Doug outlined essential ediscovery protocols for handling potentially manipulated media:

- Collect native files (not screenshots or re-encoded versions)

- Preserve chain-of-custody logs meticulously

- Require production of metadata: file system metadata, embedded metadata like EXIF data, codec data for videos

- Avoid operations that re-encode or re-wrap files (this can destroy forensic traces)

- Capture checksums immediately

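A checksum is a small, fixed-size value computed from a file’s contents. Any change to the file, whether introduced during transmission, storage, or editing, will almost certainly produce a different checksum, so capturing one at collection lets you show later that the file has not been altered.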
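As a minimal sketch of what capturing a checksum at intake might look like, the Python below computes a SHA-256 digest using only the standard library. The choice of SHA-256, the function names, and the fields in the intake record are illustrative assumptions, not something the panel prescribed and not a description of how any particular platform works.

```python
import hashlib
from pathlib import Path


def sha256_of_file(path: Path, chunk_size: int = 1 << 20) -> str:
    """Return the SHA-256 hex digest of a file, reading it in chunks."""
    digest = hashlib.sha256()
    # Open read-only in binary mode so the native file is never modified.
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def intake_record(path: Path) -> dict:
    """Build a hypothetical intake record: file name, size, and checksum."""
    return {
        "file_name": path.name,
        "size_bytes": path.stat().st_size,
        "sha256": sha256_of_file(path),
    }
```

Calling `intake_record(Path("exhibit.mov"))` on a hypothetical native file returns a small dictionary that can be stored alongside the chain-of-custody log. Recomputing the digest later and getting the same value is strong evidence that the file is byte-for-byte unchanged.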

“Production of native files is critical,” Doug stressed. “For audio, that’s WAV or AIFF files. For video, that’s MOV files. For images, JPEGs, PNGs with EXIF data if applicable.”

Nextpoint is designed with these preservation requirements in mind, automatically capturing native files with their original metadata and timestamps intact. The system maintains detailed audit trails throughout the entire lifecycle of evidence, which is exactly the kind of documentation you’ll need if you’re challenging or authenticating disputed media.

The Legal Framework: Still Evolving

Judge Rodriguez walked the panel through the current state of proposed rules for handling AI-generated evidence, noting that as of January 2026, the Advisory Committee on Evidence Rules hasn’t adopted anything yet.

For acknowledged AI evidence (where parties know AI was used), Professor Maura Grossman and Judge Paul Grimm have proposed requiring the proponent to demonstrate the validity, reliability, and lack of bias in the AI system before evidence is admitted. Judge Rodriguez continued, “That would seem to be flipping the burden. We’ve never done that in the law before.”

For unacknowledged AI evidence (deepfakes where one party claims it’s genuine and the other claims manipulation), several proposals are circulating:

- Establish higher authentication standards beyond merely using a witness

- Have judges rather than juries decide authenticity questions

- Require the challenging party to produce evidence supporting their claim of fabrication

Judge Rodriguez expressed concern about the practical implications. “How much evidence are we going to need? How many times is Dr. Hernandez going to be going all around the country testifying? Do we have enough experts? How much are they all going to cost? The system is already bloated with expenses.”

He also raised a fundamental question about jury trials. “I’m not any smarter than the 12 people in the jury when it comes to evaluating whether images have been distorted. I’m more concerned about the effect on juries and jury trials.”

Detection Tools and Techniques

Dr. Hernandez recommended several practical strategies for detecting deepfake evidence.

Monitor Digital Presence: Understanding what content already exists online about key individuals in your case is crucial for detection. “There’s a lot of content about us all over the internet. You can go on YouTube and find recorded content that somebody did 5 or 6 years ago. That content could then be used as the foundation of a deepfake.” By cataloging what source material is publicly available, you can better assess whether suspicious evidence could have been generated from those existing sources.

Invest in Training: Digital forensic specialists who are responsible for authenticating evidence will need extensive training in detecting deepfakes, including body language analysis, voice cadence recognition, and identifying facial structure anomalies that go beyond what automated detection systems can catch. That said, anyone on your legal team who handles evidence would benefit from learning to recognize telltale signs of AI manipulation, even if they’re not conducting formal forensic analysis.

Use Multiple Detection Tools: He demonstrated deepfakeometer.org, which provides a directory of available detection tools with detailed descriptions of their effectiveness. He particularly highlighted Sensity, a real-time detection tool that works during video conferences: “When you jump on a session, the tool will alert you with indicators that the voice is AI, the image is AI. It does a really good job.”

Collaborate Across Disciplines: “We have to do a lot of collaboration and information sharing across many different verticals, even outside the legal space.”

Practical Strategies for Legal Teams

Proactive Measures

- Establish ESI protocols that specifically address potentially manipulated media

- Include meet-and-confer requirements if there’s reasonable suspicion of deepfakes

- Require parties to disclose AI-generated content produced in discovery

- Mandate production of native files for all audio, video, and image evidence

- Train team members on red-flag indicators

Reactive Measures

- Don’t hesitate to challenge suspected deepfakes, even if you lack technical expertise

- Use discovery to investigate the provenance of suspicious media: What device was used? Where is that device now? Can you produce it?

- Engage experts early, and choose specialists who can both analyze evidence and explain findings clearly to judges and juries

- Understand your ethical obligations regarding disclosure

Judge Rodriguez offered an optimistic perspective: “I’m thinking a lot of this is going to work itself out. For a lot of us, we’re all concerned that this is going to hit the witness box in a trial in front of a jury right off the bat, and that’s probably not how it’s going to happen. There’s going to be discovery. People are going to ask questions.”

But he acknowledged the challenges ahead: “For really important stuff that sophisticated parties have spent some amount of money on creating a deepfake, and we have competing experts, I’m not sure what a judge is going to do with that.”

The Nextpoint Advantage

Throughout the discussion, Brett highlighted how Nextpoint’s cloud-based platform addresses many of the challenges inherent in detecting deepfake evidence in litigation:

- Automatic preservation of native files with original metadata and timestamps

- Comprehensive audit trails throughout the evidence lifecycle

- Easy collaboration with forensic experts who need access to native files

- Visual presentation tools for creating side-by-side comparisons and timeline graphics

- Security and chain of custody protections built into the platform

“The time to think about these workflows is now, not when you’re in the middle of a case with disputed evidence,” Brett emphasized. “Legal teams should validate their preservation processes with test collections to ensure everything is working correctly before they’re dealing with actual case materials.”
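One way to run the kind of test collection Brett describes is to hash every file before and after it passes through your collection workflow, then compare the two sets. The sketch below is a generic illustration in Python, not Nextpoint’s API. The function names and the folder layout (a source folder and a collected folder with matching relative paths) are assumptions made for the example.

```python
import hashlib
from pathlib import Path


def build_manifest(folder: Path) -> dict[str, str]:
    """Map each file's relative path to its SHA-256 hex digest."""
    manifest = {}
    for path in sorted(folder.rglob("*")):
        if path.is_file():
            # read_bytes loads the whole file; fine for small test sets.
            digest = hashlib.sha256(path.read_bytes()).hexdigest()
            manifest[path.relative_to(folder).as_posix()] = digest
    return manifest


def compare_collections(source: Path, collected: Path) -> dict[str, list[str]]:
    """Report files that went missing, changed, or appeared unexpectedly."""
    before = build_manifest(source)
    after = build_manifest(collected)
    shared = before.keys() & after.keys()
    return {
        "missing": sorted(before.keys() - after.keys()),
        "altered": sorted(name for name in shared if before[name] != after[name]),
        "unexpected": sorted(after.keys() - before.keys()),
    }
```

An empty report for all three keys suggests the workflow preserved the files byte for byte. Any entry under `altered` is worth investigating, since a step that re-encodes or re-wraps a file will change its digest.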

Key Takeaways

As Dr. Hernandez concluded the session, he emphasized the importance of continuous learning.

“Attending conferences like this, sessions like this on an ongoing basis, maybe gaining some official training from a reliable source can go a long way. You may not have known that deepfakes went beyond photoshopping or evolved beyond photoshopping. It goes way beyond that now.”

Deepfakes represent an accelerating risk that legal teams can no longer afford to ignore. But with the right combination of technical knowledge, proper ediscovery protocols, strategic litigation tactics, and the right tools, deepfake evidence can be detected and challenged effectively.

The key is preparation. Build your tools now, identify your experts, train your team, and equip them with platforms like Nextpoint that preserve the forensic integrity required for today’s complex cases.